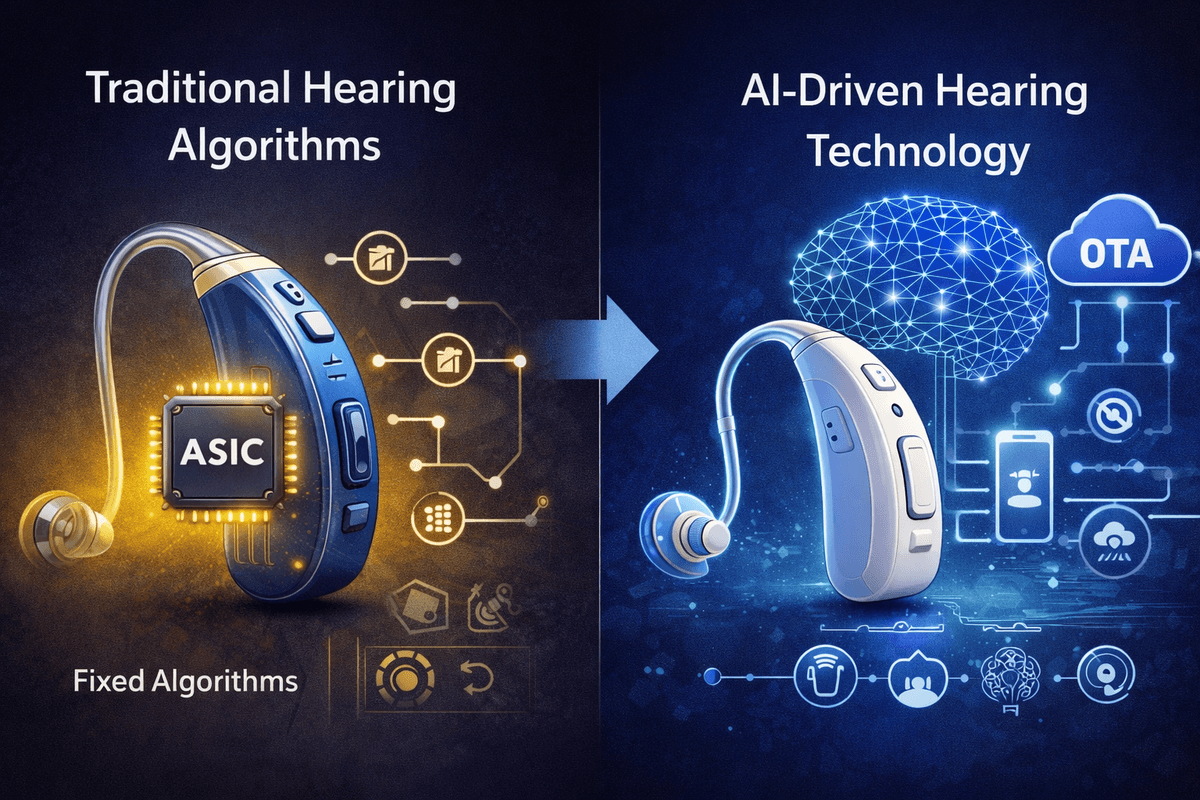

The hearing aid industry stands at an inflection point. For decades, the market has been dominated by hardware-centric solutions built on proprietary Application-Specific Integrated Circuits (ASICs)—closed systems where algorithms are etched permanently into silicon. Today, a fundamental paradigm shift is underway. Led by pioneers like Lyratone, the industry is transitioning from Hardware-Defined Hearing (HDH) to Software-Defined Hearing (SDH) and, ultimately, to AI-Defined Hearing (AIDH). This transformation promises not merely incremental improvement but a complete reimagining of how hearing assistance is developed, delivered, and experienced.

Traditional hearing aids operate on what engineers call "black box" ASIC architectures. These proprietary chips, supplied by manufacturers like Onsemi, integrate signal processing functions directly into the hardware. While this approach has delivered reliable performance for decades, it imposes severe constraints on innovation.

The primary limitation is iteration velocity. Because algorithms are hardware-encoded, any meaningful upgrade requires a complete chip redesign—a process that typically spans two to three years. Manufacturers are locked into lengthy development cycles, unable to respond quickly to emerging research or user feedback. This rigidity is particularly problematic in an era when consumer electronics evolve on monthly, not yearly, timelines.

Furthermore, traditional architectures offer limited customization. Audiologists can adjust parameters within pre-defined ranges, but the core processing logic remains immutable. For manufacturers seeking to differentiate through unique acoustic signatures or specialized form factors like Open Wearable Stereo (OWS) or cartilage conduction devices, the ASIC model presents a frustrating barrier. The chip dictates what is possible; innovation must fit within its constraints.

Cost compounds these challenges. Low-volume production of specialized ASICs drives Bill of Materials (BOM) costs to levels that keep hearing aids inaccessible to millions who need them. The economics of hardware-defined architectures have, in essence, restricted the hearing aid market to a narrow demographic willing and able to pay premium prices.

The Software-Defined Revolution

Lyratone's Software-Defined Hearing architecture fundamentally dismantles these constraints. By porting core signal processing algorithms—Wide Dynamic Range Compression (WDRC), noise reduction, feedback management—to a flexible software layer running on general-purpose System-on-Chip (SoC) platforms, SDH decouples hearing function from hardware limitations.

This architectural shift delivers immediate practical benefits. Lyratone's LyraOS platform supports 32 to 128 adjustable frequency channels for WDRC, significantly exceeding the precision available from many traditional solutions. System latency has been optimized to 7–9 milliseconds, comfortably below the FDA's 15 ms latency limit for medical-grade performance. AI-driven noise reduction achieves up to 12 dB of suppression, roughly 1.5 to 2 times the industry average of 6–8 dB.
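
To make the idea of per-channel WDRC concrete, here is a minimal sketch of a static compression curve for one channel. It is not LyraOS code: the class name, the function name, and every parameter value (gain, knee point, ratio, output ceiling) are assumptions chosen for illustration, and a real implementation would also need attack and release smoothing.

```python
from dataclasses import dataclass


@dataclass
class WdrcChannel:
    """Static compression settings for one frequency channel (hypothetical values)."""
    gain_db: float = 20.0         # linear gain applied below the knee
    knee_db: float = 50.0         # input level where compression starts
    ratio: float = 2.0            # compression ratio above the knee
    max_output_db: float = 100.0  # output ceiling


def wdrc_gain_db(input_db: float, ch: WdrcChannel) -> float:
    """Return the gain in dB that the channel applies at a given input level."""
    if input_db <= ch.knee_db:
        # Below the knee the channel is linear: constant gain.
        output_db = input_db + ch.gain_db
    else:
        # Above the knee each extra input dB yields only 1/ratio output dB.
        output_db = ch.knee_db + ch.gain_db + (input_db - ch.knee_db) / ch.ratio
    output_db = min(output_db, ch.max_output_db)
    return output_db - input_db


# A software-defined design can hold one settings object per channel,
# so the channel count becomes a configuration value rather than a chip property.
channels = [WdrcChannel() for _ in range(32)]
levels = [40.0, 50.0, 70.0, 90.0]
gains = [wdrc_gain_db(level, channels[0]) for level in levels]
print(gains)
```

Soft sounds receive the full gain while loud sounds receive progressively less, which is the behavior that "wide dynamic range compression" refers to.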

The most transformative aspect, however, is iteration speed. Because algorithms exist as software, they can be updated Over-the-Air (OTA) in weeks rather than years. Bug fixes, performance optimizations, and entirely new features reach users through routine smartphone updates. This transforms the hearing aid from a static medical device into an evolving platform that improves throughout its lifecycle.

Economic implications are equally significant. By leveraging mass-market consumer SoCs produced at 12 nm or 14 nm process nodes, Lyratone reduces BOM costs to roughly one-fifth to one-tenth of those of traditional ASIC-based solutions. This cost structure makes medical-grade hearing assistance viable at price points previously associated with consumer earbuds, directly addressing the accessibility crisis in hearing health.

AI-Defined Hearing: The Self-Learning Auditory System

Building upon the SDH foundation, AI-Defined Hearing represents the next evolutionary leap. Where SDH makes hearing algorithms programmable, AIDH makes them intelligent—capable of learning, adapting, and personalizing in real-time.

Lyratone's AIDH architecture implements a Cloud-Edge-Device synergy that transforms the hearing aid into an AIoT platform. At the edge, embedded AI processors perform real-time inference for immediate environmental adaptation. The cloud layer serves as a "global brain," aggregating anonymized data from hundreds of thousands of users to train increasingly sophisticated models. These improvements are then pushed to devices through OTA updates, creating a continuous feedback loop of collective learning and individual benefit.
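
The feedback loop described above can be sketched in a few lines. This is an illustration only, not Lyratone's pipeline: the report fields (`environment`, `nr_offset_db`), the function names, and the plain averaging step are all assumptions, and a production system would train real models rather than average offsets.

```python
from collections import defaultdict
from statistics import mean


def aggregate_reports(reports):
    """Cloud step: average anonymized adjustments per environment label."""
    by_env = defaultdict(list)
    for report in reports:
        # Reports carry no user identifier, only an environment and an offset.
        by_env[report["environment"]].append(report["nr_offset_db"])
    return {env: mean(values) for env, values in by_env.items()}


def apply_update(device_defaults, cloud_model, version):
    """Device step: merge the pushed model into local defaults and bump the version."""
    updated = dict(device_defaults)
    updated.update(cloud_model)
    return {"version": version + 1, "defaults": updated}


reports = [
    {"environment": "restaurant", "nr_offset_db": 3.0},
    {"environment": "restaurant", "nr_offset_db": 5.0},
    {"environment": "street", "nr_offset_db": 2.0},
]
model = aggregate_reports(reports)
state = apply_update({"restaurant": 0.0, "quiet": 0.0}, model, version=1)
print(state)
```

Environments the cloud has no data for, such as "quiet" here, keep their existing local defaults after the update.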

The practical manifestations are profound. Rather than switching between fixed environmental presets ("Quiet," "Restaurant," "Outdoor"), AIDH systems dynamically adjust compression curves, noise reduction aggressiveness, and directional microphone patterns based on real-time acoustic analysis and user behavior patterns. The device learns that a particular user prefers more aggressive low-frequency reduction in crowded spaces, or that certain environmental signatures correlate with the user's regular commute.
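
One simple way a device could learn such a preference is to blend each manual adjustment into a stored per-environment offset. The sketch below uses an exponential moving average as a stand-in technique; the class name, the environment labels, and the learning rate are hypothetical and are not taken from Lyratone's system.

```python
class PreferenceLearner:
    """Tracks a per-environment gain offset learned from manual adjustments."""

    def __init__(self, learning_rate=0.5):
        self.learning_rate = learning_rate  # hypothetical tuning value
        self.offsets_db = {}

    def observe(self, environment, user_adjustment_db):
        """Move the stored offset part of the way toward the user's adjustment."""
        current = self.offsets_db.get(environment, 0.0)
        step = self.learning_rate * (user_adjustment_db - current)
        self.offsets_db[environment] = current + step

    def suggested_offset(self, environment):
        """Return the learned offset, or 0 dB for an unseen environment."""
        return self.offsets_db.get(environment, 0.0)


learner = PreferenceLearner(learning_rate=0.5)
# The user turns low frequencies down by 4 dB on three visits to a crowded space.
for adjustment in (-4.0, -4.0, -4.0):
    learner.observe("crowd", adjustment)
print(learner.suggested_offset("crowd"))
```

Each repeated adjustment moves the stored offset closer to the user's choice, so a consistent habit is learned quickly while a one-off change has limited effect.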

Self-fitting capabilities advance dramatically under AIDH. Lyratone's platform already enables users to perform pure-tone audiometry via smartphone, generating accurate hearing thresholds from 250 Hz to 4000 Hz. With AI enhancement, these fitting processes become predictive and conversational: anticipating user needs, explaining adjustments in natural language, and refining prescriptions based on ongoing usage feedback rather than single-point testing.
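
As a rough illustration of how measured thresholds can become a starting prescription, the sketch below applies the classic half-gain rule of thumb, which sets gain at about half the hearing loss at each frequency. This is a textbook simplification and not Lyratone's fitting formula; the function name, the chosen test frequencies, and the sample audiogram are assumptions.

```python
# Test frequencies within the 250 Hz to 4000 Hz range (an assumed selection).
AUDIOMETRIC_FREQS_HZ = (250, 500, 1000, 2000, 4000)


def half_gain_prescription(thresholds_db_hl):
    """Map hearing thresholds (dB HL) to starting gains (dB) via the half-gain rule."""
    missing = [f for f in AUDIOMETRIC_FREQS_HZ if f not in thresholds_db_hl]
    if missing:
        raise ValueError(f"missing thresholds for: {missing}")
    return {f: 0.5 * thresholds_db_hl[f] for f in AUDIOMETRIC_FREQS_HZ}


# Hypothetical audiogram with a sloping high-frequency loss.
audiogram = {250: 20, 500: 30, 1000: 40, 2000: 50, 4000: 60}
prescription = half_gain_prescription(audiogram)
print(prescription)
```

A single-point result like this is only a first estimate; the ongoing refinement from usage feedback is what adjusts it afterward.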

Clinical Validation and Commercial Reality

These architectural advantages are not theoretical. Lyratone's solutions have secured both US FDA OTC registration and China NMPA Class II medical device certification, demonstrating that software-defined approaches meet rigorous clinical standards. The company's technology self-sufficiency rate exceeds 90%, covering algorithms, chip application, hardware design, and fitting systems, which ensures deep customization capability without third-party dependencies.

Form factor flexibility illustrates the practical power of SDH/AIDH. The same underlying platform powers traditional Receiver-in-Canal (RIC) and Behind-the-Ear (BTE) medical devices, fashionable True Wireless Stereo (TWS) earbuds, and emerging categories like cartilage conduction aids—the "third auditory pathway" that transmits sound through ear cartilage vibrations. This versatility enables manufacturers to address diverse clinical indications and lifestyle preferences from a unified technical foundation.

Hearing as a Service: The Future Landscape

Looking forward, the hearing aid is evolving from product to service. The "Hearing as a Service" (HaaS) model enabled by SDH and AIDH architectures treats auditory assistance as an ongoing relationship rather than a one-time transaction. Users subscribe to continuous improvement—receiving not just hardware but perpetually upgrading intelligence, remote audiologist support via telehealth platforms, and integration with broader health monitoring ecosystems.

This transition aligns hearing health with broader trends in personalized medicine and preventative care. Future AIDH platforms will likely incorporate biometric monitoring, fall detection, cognitive health indicators, and seamless connectivity with smart home systems. The ear becomes not merely a site for amplification but a portal for comprehensive wellness management.

Conclusion

The comparison between AI-defined and traditional hearing algorithms is ultimately a choice between constraint and possibility. Hardware-defined architectures, while proven, cannot deliver the accessibility, adaptability, and continuous improvement that modern users demand. Software-defined and AI-defined approaches break these boundaries: lowering costs by up to an order of magnitude, accelerating innovation cycles from years to weeks, and transforming hearing aids from static devices into intelligent, evolving platforms.

Lyratone's analysis of this landscape suggests a clear trajectory: the future of hearing health belongs to architectures that treat software and AI as foundational, not supplementary. For manufacturers, audiologists, and the hundreds of millions worldwide with hearing impairment, this transition cannot arrive soon enough.